TL;DR: A data platform runs workloads — it doesn’t make data trusted, governed, or reusable. Without a data management layer, AI amplifies every unresolved definition dispute and quality gap. “Manage it later” is the most expensive sentence in modern data strategy. The winners in the AI era will manage meaning at scale.

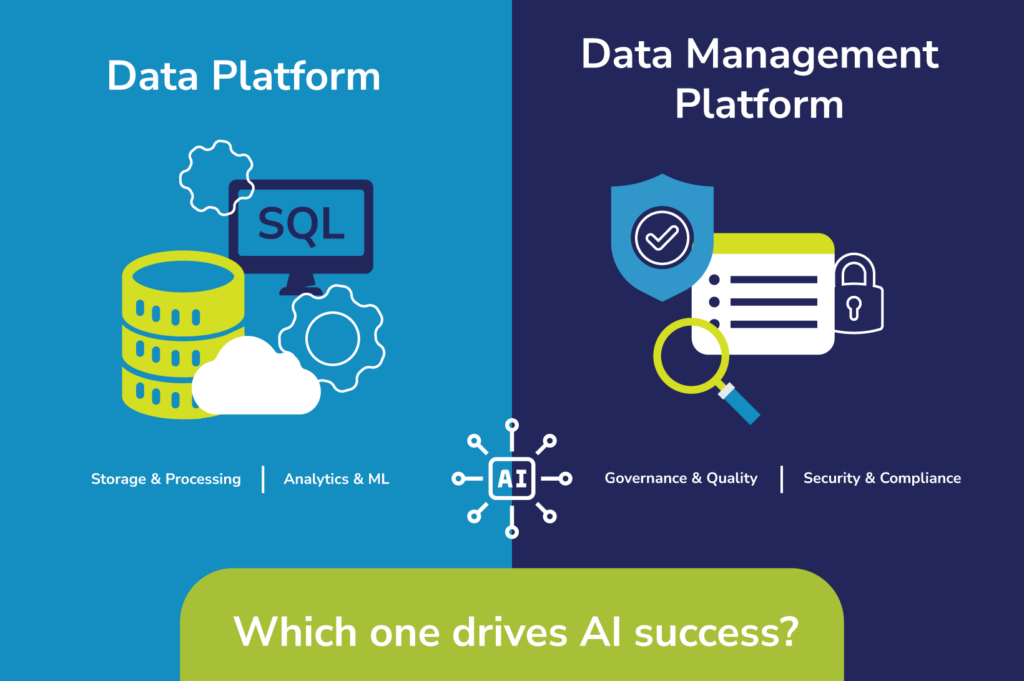

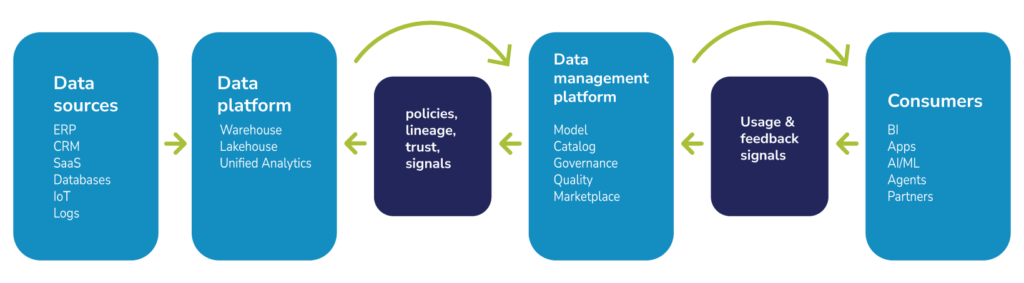

A data platform and a data management platform are not interchangeable. A data platform is the execution layer where data is stored, processed, and served for analytics and AI (warehouse, lakehouse, unified analytics stack). A data management platform is the control plane that makes data understandable, trusted, governed, and reusable, often across multiple data platforms and teams. Understanding the difference between the two is essential before investing in either.

Quest Software’s February 2026 launch of the Quest Trusted Data Management Platform usefully spotlights the distinction because its core design is explicitly about turning raw data into reusable, trust-scored, AI-ready data products through converged capabilities like modeling, cataloging, governance, quality, and a marketplace.

Introduction

Enterprises keep buying world-class data platforms and then acting surprised when they still can’t agree on “revenue,” still rebuild the same datasets in every department, and still fear what an auditor might find. They didn’t buy a strategy; they bought an engine, and then assumed horsepower would create alignment.

Generative AI is now exposing the lie behind “manage it later.” A warehouse can scale compute. It cannot, by itself, scale meaning. If “customer” and “order” are contested across functions, AI doesn’t reconcile the dispute; it amplifies it, faster. Gartner [1] has predicted that 30% of generative AI projects will be abandoned after proof of concept by the end of 2025 because of factors that include poor data quality and inadequate risk controls. Those issues are fundamentally about trust and governance, not compute.

Data platforms as execution engines

A data platform runs data workloads. It connects to sources, ingests data, stores it, transforms it (batch and streaming), and serves it to reporting, analytics, and machine learning. Its economic promise is operational leverage: elastic scale, simplified operations, and improved price-to-performance.

- Google BigQuery: Google frames BigQuery as an “autonomous data to AI platform” that automates the data lifecycle from ingestion to AI-driven insights.

- Amazon Redshift: AWS describes Amazon Redshift as a “fully managed petabyte-scale data warehouse service in the cloud” that “lets you access and analyze data without all of the configurations of a provisioned data warehouse.”

- Microsoft Fabric: Microsoft positions Fabric as a “unified, AI-powered data platform to simplify data management and analytics.”

- Databricks Data Intelligence Platform: Databricks positions its Data Intelligence Platform as “built on a lakehouse to provide an open, unified foundation for all data and governance.”

- Snowflake AI Data Cloud: Snowflake positions its AI Data Cloud as a “fully-managed, enterprise-ready platform” for accelerating “time to data and AI innovation.”

Data platforms are necessary. They are not sufficient.

In heterogeneous enterprises — multiple clouds, engines, SaaS sources, and legacy systems — one platform can make data available, but it does not automatically make data findable, compliant, high-quality, and semantically consistent across teams.

That requires a distinct set of management capabilities, and Gartner’s “data ecosystem” framing [2] reinforces the point: interoperability plus shared governance and metadata practices are required to unify distributed components into a coherent whole.

| Dimension | Data Platforms | Data Management Platforms |
| --- | --- | --- |
| Core question | Where and how do we store, compute, and serve data? | How do we ensure data is understood, trusted, governed, and reusable? |
| Primary artifacts | Tables/files, marts, feature stores, compute jobs | Metadata, semantic models, policies, lineage graphs, quality/trust signals, data products |
| What they optimize | Performance, elasticity, operations efficiency, price/performance | Trust, transparency, compliance, reuse, reduced integration debt |
| Typical success metric | Faster time to insight; lower runtime cost per workload | Higher adoption of trusted data; fewer incidents; less rework; faster reuse |
| Failure mode if missing | Workloads are slow/expensive or hard to scale | “Data swamp”: duplicated datasets, inconsistent definitions, and low trust |

Data management platforms as control planes

A data management platform makes data usable at enterprise scale. When weighing a data platform vs. a data management platform, the key distinction is this: where a data platform is an execution engine, a data management platform is a control plane that operationalizes modeling and semantics, cataloging and lineage, governance and access workflows, and continuous data quality signals. Increasingly, it also operationalizes “data products,” meaning it packages technical assets with ownership, policies, and trust indicators so consumers can self-serve safely instead of renegotiating meaning every time they use a dataset.

Quest Software’s launch of the Quest Trusted Data Management Platform is an explicit control-plane bet. Quest describes a unified, SaaS-native platform that brings together data modeling, data cataloging, data governance, data quality, and a data marketplace, and adds an Automated Data Product Factory to reduce trusted data product creation from months to days. Among these converged capabilities is trust scoring (with multiple measurable components), which makes trust visible to consumers and reduces “can I trust this data?” friction.
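To make the idea of a trust score with multiple measurable components concrete, here is a minimal sketch of one way such a score could be computed: a weighted average of normalized quality signals. The component names (`completeness`, `validity`, `freshness`, `lineage_coverage`), the weights, and the 0-100 scale are all assumptions chosen for illustration. They are not Quest's scoring formula, which the source does not describe.

```python
from dataclasses import dataclass

# Hypothetical component weights: illustrative only, not a vendor formula.
WEIGHTS = {
    "completeness": 3,
    "validity": 3,
    "freshness": 2,
    "lineage_coverage": 2,
}


@dataclass(frozen=True)
class TrustSignals:
    """Measured signals for one dataset, each normalized to the range 0..1."""
    completeness: float
    validity: float
    freshness: float
    lineage_coverage: float


def trust_score(signals: TrustSignals, weights: dict = WEIGHTS) -> float:
    """Return a 0-100 score as the weighted average of the component signals."""
    weighted_sum = 0.0
    for name, weight in weights.items():
        value = getattr(signals, name)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
        weighted_sum += weight * value
    return round(100 * weighted_sum / sum(weights.values()), 1)


# Example dataset with strong completeness but weak lineage coverage.
orders = TrustSignals(completeness=0.98, validity=0.95,
                      freshness=0.80, lineage_coverage=0.60)
print(f"orders trust score: {trust_score(orders)}")
```

Keeping the components visible alongside the single number matters in practice: a consumer who sees a middling score can tell whether the weak spot is stale data or missing lineage, rather than having to ask the producing team.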

The costs of low-quality data in high-stakes decisions

Bad data is not free. And neither is a collection of point solutions.

The costs of poor data quality

Forrester [3] has stated that poor data quality can cost some organizations up to $25 million per year or more. Those losses rarely appear as a single line item; they are spread across rework, delays, customer-impacting mistakes, and compliance exposure. When you adopt a data management platform, you are not just “adding governance”; you are changing the unit economics of every downstream analytics and AI initiative by reducing the repeated costs of chaos and confusion.

The costs of fragmented point solutions

Tool sprawl and integration debt add a second cost. Gartner [4] has reported that organizations can save up to 50% on costs and operational effort through converged platforms, partly because enterprises often deploy “a dozen” overlapping data management solutions.

The value of trusted data in every decision

The results we have seen with Quest’s platform align with the consolidation logic: up to 54% faster time to data product delivery and up to 40% cost savings, while also improving reuse by controlling data product sprawl.

Even if you discount “up to” numbers, the direction is economically intuitive:

- Fewer point tools

- Fewer brittle handoffs

- Fewer duplicate pipelines

- Fewer places for trust to break

A practical internal business case

A practical internal business case can start with time pricing. Consider 20 data engineers and analysts at a fully burdened $180,000 each: $3.6 million per year in labor. If only 10% of time is consumed by hunting definitions, validating data, and rebuilding datasets that already exist, that’s $360,000 per year of friction, before counting risk reduction, faster product delivery, and the downstream opportunity cost of delayed decisions. The central claim of data management platforms is that they reduce this rework loop by making trust visible and reuse the default.
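The arithmetic above is easy to adapt to your own headcount and cost assumptions. The following is a minimal sketch: the function name `annual_friction_cost` and the extra 5% and 20% scenarios are illustrative assumptions, while the 20-person, $180,000, 10% case comes from the example in the text.

```python
def annual_friction_cost(headcount: int, burdened_cost: float,
                         friction_share: float) -> float:
    """Yearly labor cost lost to hunting definitions, validating, and rebuilding.

    friction_share is the fraction of working time (0..1) spent on that rework.
    """
    if not 0.0 <= friction_share <= 1.0:
        raise ValueError("friction_share must be between 0 and 1")
    return headcount * burdened_cost * friction_share


HEADCOUNT = 20            # data engineers and analysts (from the example above)
BURDENED_COST = 180_000   # fully burdened cost per person per year, in USD

total_labor = HEADCOUNT * BURDENED_COST
print(f"Total labor: ${total_labor:,.0f}")  # Total labor: $3,600,000

# The 10% case matches the text; 5% and 20% are assumed sensitivity scenarios.
for share in (0.05, 0.10, 0.20):
    cost = annual_friction_cost(HEADCOUNT, BURDENED_COST, share)
    print(f"{share:.0%} friction -> ${cost:,.0f} per year")
```

Running the sensitivity range is worth the extra lines because the friction share is the least certain input. A short time-tracking survey of the team is usually enough to replace the assumed 10% with a measured figure.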

The value of a converged data management platform

The Quest Trusted Data Management Platform’s promise is not “more data.” It is fewer reinventions of the same data, fewer risky or noncompliant uses of sensitive data, and fewer stalled projects caused by distrust — achieved by moving from “datasets everywhere” to managed, trust-scored data products with reusable governance context.

How the layers work together

Gartner [2] has found that enterprises are moving toward data ecosystems and that cloud providers are responding with more integrated experiences, fueling the rise of converged data management platforms designed to reduce time to market and minimize integration debt. Even if the layers converge in product packaging, the architectural distinction remains practical: one layer runs workloads; the other layer operationalizes meaning, control, and fitness-for-use.

This layering also explains why “data products” have moved from theory to operating model. Gartner [5] defines a data product as “a curated, self-contained asset that combines data, metadata, templates, and implementation logic” and is “consumption-ready, maintained to agreed-upon SLAs, governed by data contracts, and certified for reuse.” This is the operating model target the Quest platform is designed to industrialize.
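One way to read that definition is as a checklist that a dataset must pass before it is published for reuse. The sketch below models a data product descriptor and reports which parts of the definition are still missing. The class name `DataProduct`, its field names, and the `readiness_gaps` helper are hypothetical and chosen for illustration; they do not reflect Gartner's or Quest's actual schema.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class DataProduct:
    """Hypothetical descriptor that packages a dataset with its governance context."""
    name: str
    owner: str = ""                                         # accountable team or person
    description: str = ""                                   # business meaning (metadata)
    schema: Dict[str, str] = field(default_factory=dict)    # column name -> type
    freshness_sla_hours: Optional[int] = None               # agreed-upon SLA
    contract_version: Optional[str] = None                  # data contract in force
    certified: bool = False                                 # approved for reuse


def readiness_gaps(product: DataProduct) -> List[str]:
    """Return the names of the fields that block consumption-ready status."""
    gaps = []
    if not product.owner:
        gaps.append("owner")
    if not product.description:
        gaps.append("description")
    if not product.schema:
        gaps.append("schema")
    if product.freshness_sla_hours is None:
        gaps.append("freshness_sla_hours")
    if product.contract_version is None:
        gaps.append("contract_version")
    if not product.certified:
        gaps.append("certified")
    return gaps


draft = DataProduct(name="orders", owner="sales-data-team",
                    schema={"order_id": "string", "amount": "decimal"})
print(readiness_gaps(draft))
```

An empty list means every element of the definition is present. Anything else gives the producing team a concrete to-do list before the product reaches a marketplace or catalog.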

Conclusion

The most expensive sentence in modern data strategy is “We’ll govern it later.” That sentence turns dashboards into debates, models into credibility crises, and audits into fire drills.

Data platforms will keep getting faster, cheaper, and more AI-native. That is not the bottleneck. The bottleneck is whether your organization can consistently answer four questions:

- What data do we have?

- What does it mean?

- Can we trust it?

- And can we reuse it safely?

The launch of the Quest Trusted Data Management Platform is a reminder that the winners in the AI era will not be the companies with the most data in the cloud — they will be the companies that can manage meaning at scale and therefore move fast without breaking themselves.

Sources:

1. Gartner, “Gartner Predicts 30% of Generative AI Projects Will Be Abandoned After Proof of Concept By End of 2025,” July 2024

2. Gartner, “Data Ecosystems to Support All Your Data Workloads,” n.d.

3. Forrester, “Data Culture And Literacy Survey, 2023,” November 2023

4. Gartner, “Market Guide for Data Management Platforms,” September 2025

5. Gartner, “How to Build and Manage Data Products,” November 2025
