Microsoft Teams security is a hot topic. Four reasons make it a top priority:

- Teams is a gateway into your business. As a service, Teams is tightly integrated with Microsoft 365 and leverages critical applications such as SharePoint Online, Exchange Online, OneDrive for Business, and Azure Active Directory (Azure AD). The unifying end-user client sits on top of these workloads to fuel effective collaboration, but it also represents an entry point into the business that IT administrators need to secure to protect the assets it can reach.

- Teams is popular. Microsoft Teams had ~20 million daily active users in November of 2019, then 44 million four months later, then 75 million six weeks after that. A user base growing that fast is generating a lot of valuable enterprise data that is an appealing target for bad actors.

- Teams is a moving target. Teams has surged as a must-have tool for the work-from-home era, eclipsing longtime-favorite collaboration tools like Slack. A steady stream of new features makes life easier for your co-workers, but it also offers more points of potential attack.

- Teams runs your business. Organizations of all sizes and shapes now depend on Teams for communication, collaboration, and meetings. Business continuity now depends on it. A compromised Teams deployment will compromise your ability to do business.

This post will provide a primer on what it takes to keep Microsoft Teams secure while still maximizing end-user collaboration in the Teams service.

Security before the cloud

In the days before cloud computing, you used to rely on your company’s firewall as a secure perimeter. You ran your application servers, file servers, Exchange Server, SharePoint Server and maybe Skype for Business Server all behind a firewall in your data center. Your goal was to keep good stuff in and bad stuff out, and a key pillar of security was the firewall configuration.

What kind of work were your users doing inside the firewall? Two main kinds:

- communicating by phone, message, private chat, group chat and email

- working on files like documents, spreadsheets, presentations and images

That makes up about 95% of the workday, for most of us.

Since the advent of cloud offerings like Microsoft Office 365, users can communicate and work on files outside of the data center. Instead of having to run their own servers and firewall, companies subscribe to cloud services for products like OneDrive, Exchange, SharePoint, Office 365 and Skype for Business. In this scenario, there is no firewall, so the focus must shift to securing the people, not the perimeter. This starts with securing the most important aspect of each user: their credentials. Strong passwords and multi-factor authentication are now basic minimum requirements. From here, we can leverage cloud security services that ensure users are connecting only from specific known locations and on approved devices. That’s better security than a firewall and a simple username and password.

But Microsoft Teams security goes much further than that.

Is Microsoft Teams secure?

Teams is designed around four main functions:

- Chatting

- Meeting

- Calling

- Collaborating

The first three of those functions align with the co-worker communication mentioned above. The fourth one, collaborating, aligns with working on files. In other words, Teams bundles communication and file sharing, and the essence of Microsoft Teams security is ensuring that communication and file sharing occur only among known, authorized users who should have access to the data.

So, is Microsoft Teams secure?

The short answer is “Yes, it is secure.”

Microsoft Teams is designed to be secure, but like the doors and windows on your house, you need to employ the locks in a way that strikes the best balance between security and ease-of-use.

As an administrator, the most basic but significant lock on the front door is the underlying identity of the user account. One advantage of all Microsoft 365 applications, including Teams, is that the user identity resides in Azure AD. Recent advancements in Azure AD identity security capabilities are a big leap forward in security for all applications that use it. Features such as configurable MFA account options, account lockout settings, and support for single sign-on across applications are basic capabilities that have evolved to be very effective pillars of identity security. Premium Azure AD features such as Identity Protection leverage account activity signals to detect and investigate advanced threats in all Microsoft cloud applications, including Teams.

More advanced Azure AD features, such as Conditional Access and Privileged Identity Management, can also leverage those signals to fortify privileged identities, which offer the ‘keys to the kingdom’.

Microsoft 365 Group Membership

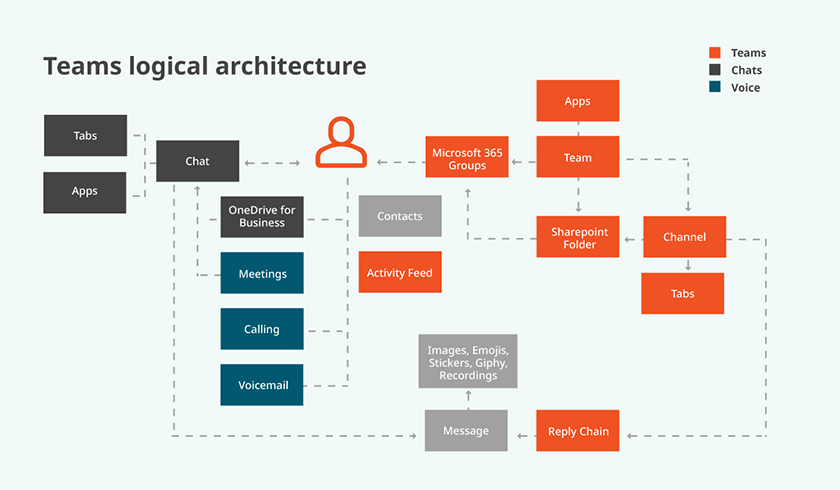

Another important Microsoft Teams security pillar is the Microsoft 365 Group. Each team is associated with a Microsoft 365 Group. The membership of that group determines ‘who’ can access ‘what’ in a team. In the Teams architecture diagram, access to team collaboration (channel chats, files, etc.) depicted in the orange boxes is governed by membership in the underlying Microsoft 365 Group.

In other words, access to the data and capabilities of a team is governed by the owners and members of that team, which is determined by their membership in the associated Microsoft 365 Group.

Once the underlying identity is fortified and the appropriate team and group memberships are in place, the security of the Microsoft Teams application itself can be strengthened by configuring cloud settings.

Securing the Teams application

Once the user identities are secure, there are several areas of the Teams application and service which should be reviewed and configured to stay secure.

Security through Teams lifecycle best practices

Although not directly related to security, implementing good Teams hygiene around the lifecycle of individual teams (e.g. creation, use, and expiration) will reduce the attack surface for a potential security breach.

Here are some rules of thumb for ensuring your business collaboration platform is secure:

- Enforce rules — Make sure all teams have more than one owner. Ownerless teams break the chain of responsibility for the data and security settings of that team.

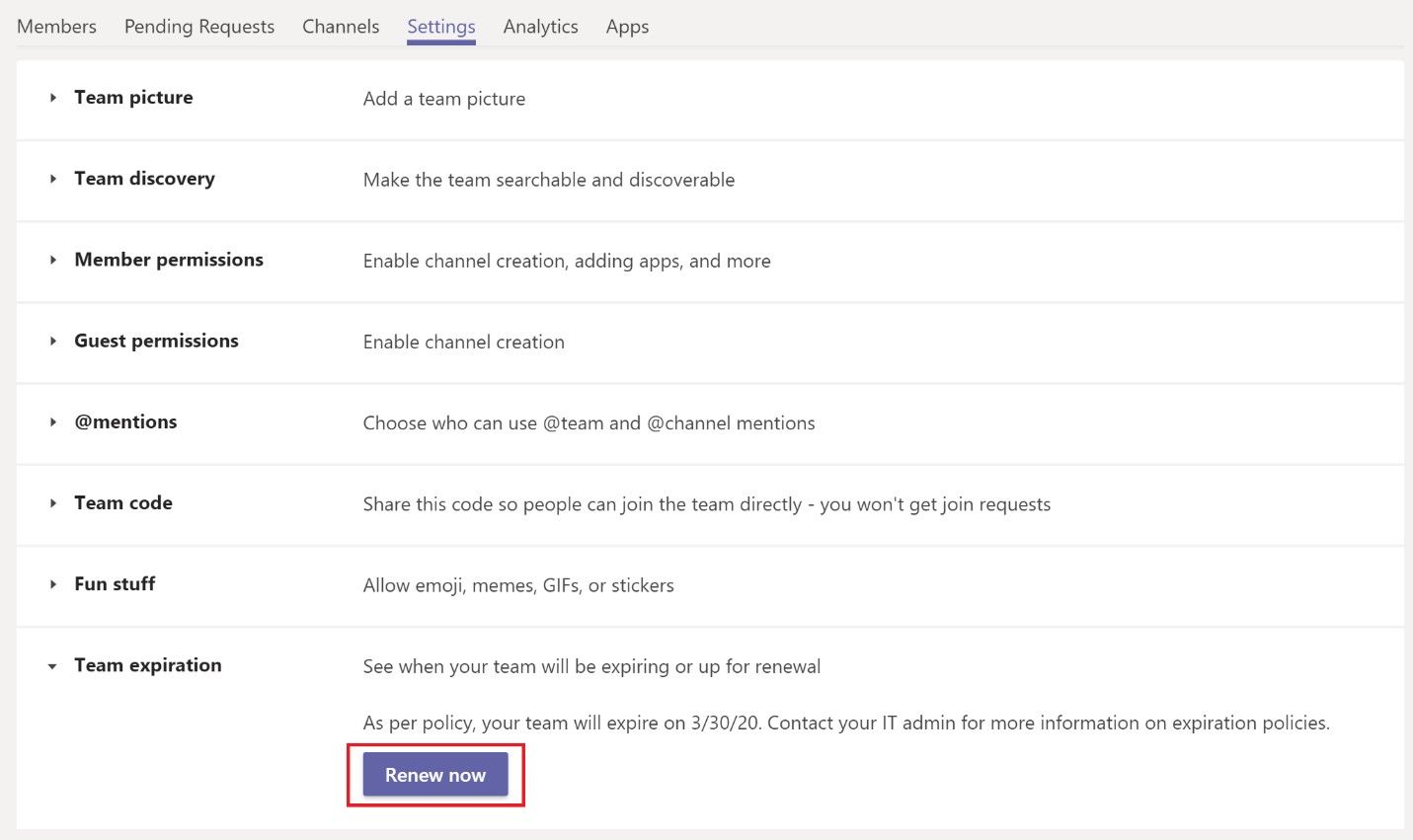

- Apply expiration policies — The longer a team is in use, the more data it accumulates. Any sensitive information or intellectual property the team stores represents a security risk, especially if the team outlives its useful purpose and the data is just sitting there. All guest and external access remains intact unless you as the admin change the status of the team. Expiration policies allow team owners to renew the group if it’s still needed.
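
The two rules of thumb above lend themselves to a periodic check against an inventory of teams. The following is a minimal sketch, not a Teams API: the `TeamRecord` fields, the 180-day idle threshold, and the two-owner minimum are assumptions chosen for illustration, and the inventory itself would come from whatever reporting export you already use.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class TeamRecord:
    # Hypothetical inventory record; field names are illustrative only.
    name: str
    owners: list
    last_activity: date


def audit_teams(teams, today, max_idle_days=180, min_owners=2):
    """Return {team name: [findings]} for teams that break a hygiene rule."""
    findings = {}
    idle_cutoff = today - timedelta(days=max_idle_days)
    for team in teams:
        issues = []
        # Rule 1: every team should have more than one owner.
        if len(team.owners) < min_owners:
            issues.append(f"has {len(team.owners)} owner(s), needs at least {min_owners}")
        # Rule 2: teams that have gone quiet are candidates for expiration.
        if team.last_activity < idle_cutoff:
            issues.append(f"no activity since {team.last_activity.isoformat()}")
        if issues:
            findings[team.name] = issues
    return findings
```

Running a check like this on a schedule gives owners a chance to renew or retire a team before its data and guest access are forgotten.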

External access

In many enterprises, most users are now outside of a firewall, communicating and working on files with their fellow employees. Why not communicate and work on files with users from other organizations? Security in Microsoft Teams is designed to allow that in two ways: external access and guest access.

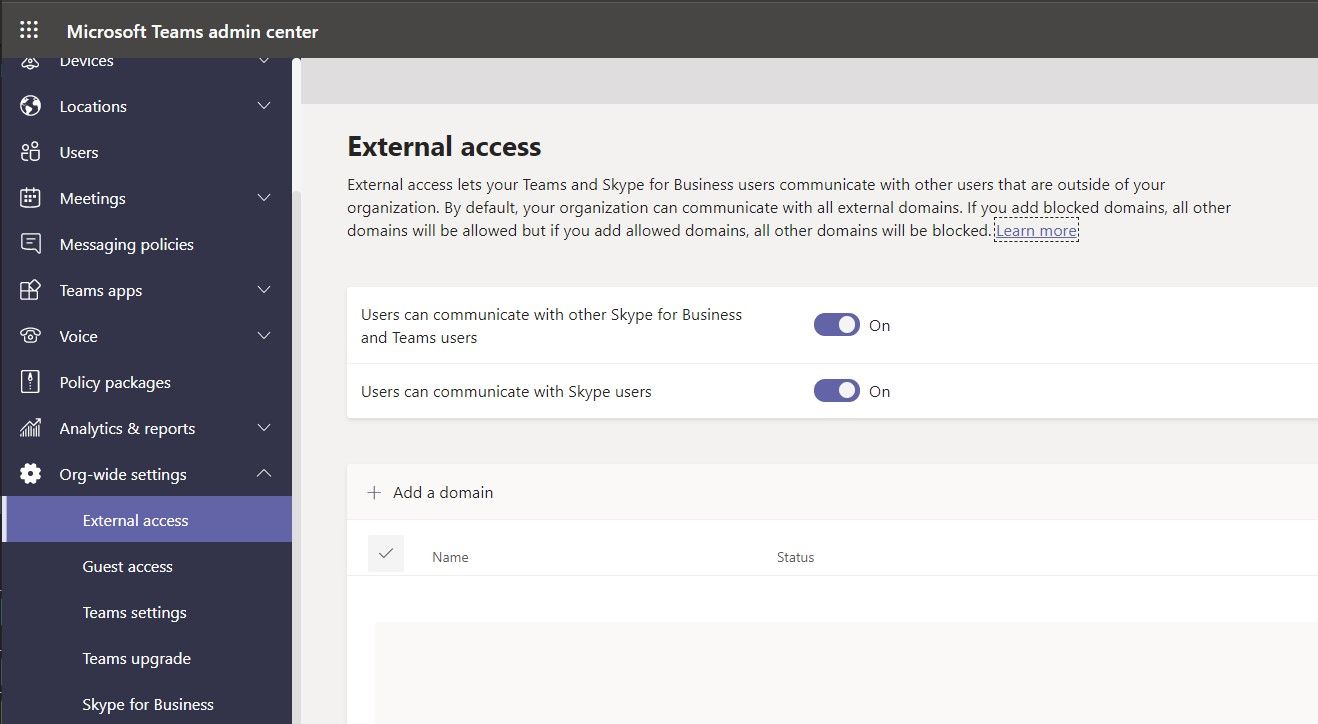

External access is also called federation. When enabled, you can allow Teams users from an external domain (like their-company.com) to collaborate with people in your domain (like your-company.com). It’s useful when baker@their-company.com and charlie@your-company.com need to work with each other. They can also bring other co-workers in.

By default, external access is enabled in Teams. That means that your users can collaborate over Teams with all external domains. Regulated organizations in particular will want to govern that setting closely.

Some organizations may be tempted to disable external access altogether; in pre-cloud terms, why not just disable access to an application from outside your firewall? The reason is that external communication is a significant part of the business value and competitive advantage of Teams, and Microsoft Teams has controls to govern it.

Suppose you want your users to contact people only in specific businesses other than yours. Teams provides options for three common scenarios:

- Open federation — This default setting allows users in your organization to find, call, chat and schedule meetings with users from other domains.

- Allow specific domains — This setting limits external access to the domains you specify and blocks access to users from all others. You might use this for trusted partners, vendors and customers.

- Block specific domains — This allows external access to/from all external domains except the ones you specify here. You might use this for known competitors and parties that represent some sort of risk to your organization.
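
The three scenarios above boil down to one decision per external address. Here is a minimal sketch of that decision logic; the `FederationMode` enum and `is_domain_allowed` function are hypothetical names for illustration and do not correspond to a Teams API.

```python
from enum import Enum


class FederationMode(Enum):
    OPEN = "open"              # all external domains allowed (the default)
    ALLOW_LIST = "allow_list"  # only the listed domains allowed
    BLOCK_LIST = "block_list"  # every domain allowed except the listed ones


def is_domain_allowed(address, mode, domains=frozenset()):
    """Decide whether an external user's domain passes the configured mode."""
    # Take everything after the last "@" and compare case-insensitively.
    domain = address.rsplit("@", 1)[-1].lower()
    listed = domain in {d.lower() for d in domains}
    if mode is FederationMode.OPEN:
        return True
    if mode is FederationMode.ALLOW_LIST:
        return listed
    return not listed  # BLOCK_LIST
```

For example, with `ALLOW_LIST` and `{"their-company.com"}`, baker@their-company.com passes and an address from any other domain does not.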

For this setting, and any security configuration, it’s a good idea to take inventory of your settings and create a baseline, and then monitor for changes or configuration drift. Also, you can monitor end-user external communication and file sharing, and configure Teams external access over time as the needs of the business change.
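
Detecting configuration drift amounts to comparing a saved baseline with the current state. The sketch below assumes settings have already been exported into flat dictionaries; the setting names in the usage example are made up for illustration.

```python
def diff_settings(baseline, current):
    """Compare two flat settings dicts and report every value that changed.

    Returns {setting: (baseline value, current value)}; a setting that exists
    on only one side is reported with the placeholder "<missing>".
    """
    drift = {}
    for key in sorted(baseline.keys() | current.keys()):
        before = baseline.get(key, "<missing>")
        after = current.get(key, "<missing>")
        if before != after:
            drift[key] = (before, after)
    return drift


# Usage with illustrative setting names:
baseline = {"external_access": "allow_list", "guest_access": False}
current = {"external_access": "open", "guest_access": False, "third_party_storage": True}
changes = diff_settings(baseline, current)
```

An empty result means nothing has drifted; anything else is a prompt to confirm the change was intended.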

Guest access

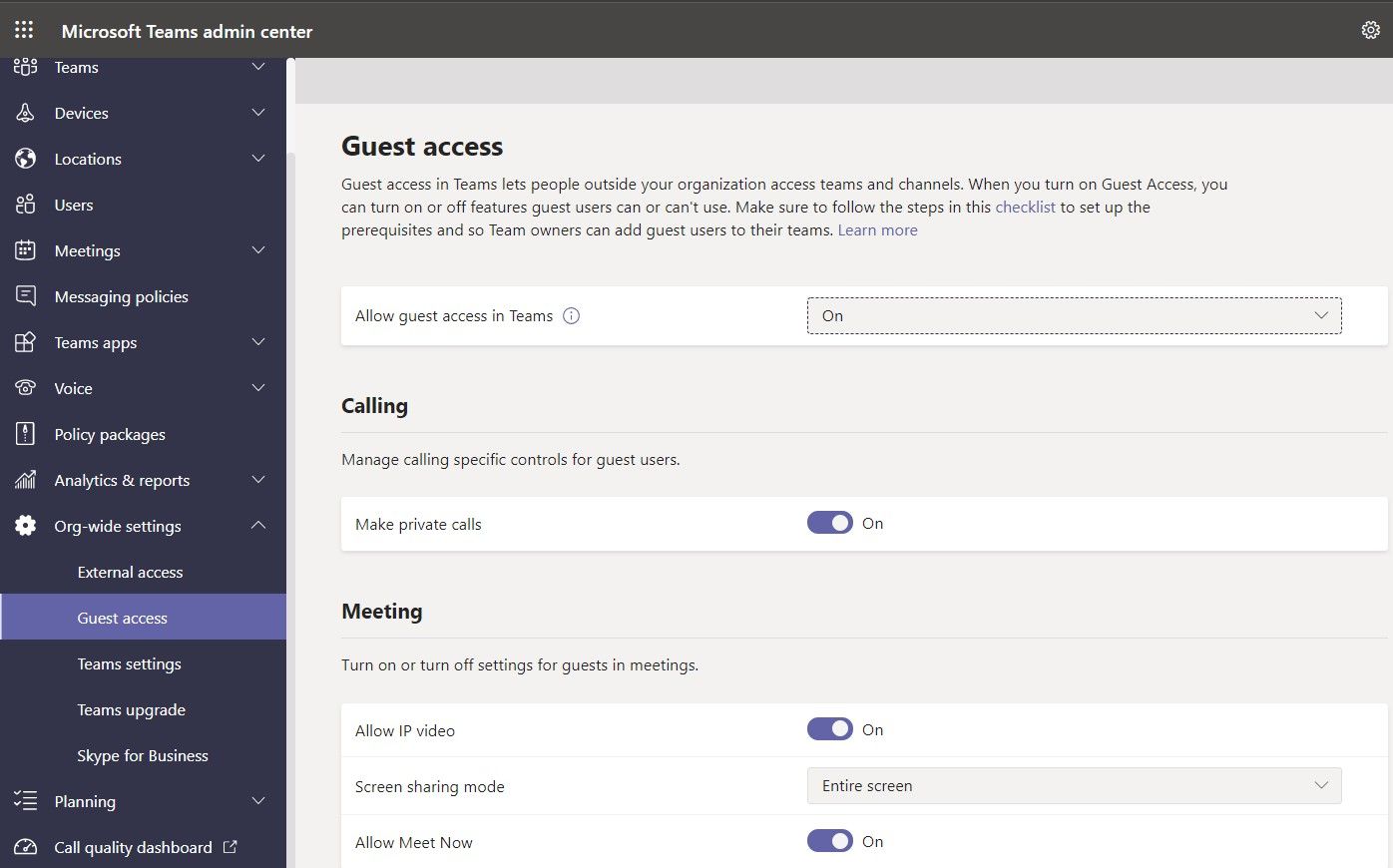

A Microsoft Teams guest user is a user outside of your organization who, through a guest invitation process, is given an Azure AD guest account within your Azure AD tenant. With Teams guest access, you can grant access to individuals outside of your organization, and govern access to your enterprise data.

In effect, there are two big on/off switches at the tenant level that control whether guests have access to the teams in your Teams tenant. One switch is a Microsoft Teams tenant setting that resides in the Teams Admin Center. The other switch resides in the Microsoft 365 Portal and governs guest access to the underlying Microsoft 365 groups used by individual teams. If both are enabled, guest access to specific teams can still be disabled by an administrator using PowerShell to disable guest access to the associated Microsoft 365 group.
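
The way these switches combine can be summarized in a few lines. This is a sketch of the logic described above only; the function and parameter names are hypothetical.

```python
def guest_access_effective(teams_switch_on, groups_switch_on, group_allows_guests=True):
    """Guests can reach a team only when both tenant-level switches are on
    and the team's own Microsoft 365 group has not been excluded."""
    return teams_switch_on and groups_switch_on and group_allows_guests
```

Turning off either tenant switch blocks guests everywhere, while the per-group flag lets an administrator carve out individual teams.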

If guest access is enabled at the Teams tenant level, administrators can further govern what capabilities guests have in the Teams Admin Center.

Once guest access is enabled in a team, finer-grained control rests at the team level through settings that the team owner enables or disables. For example, the owner can specify whether guests are able to edit or delete messages in channel posts. That’s an important setting when it comes to having a permanent record of communication.

As an admin, you’ll want to know which teams have and don’t have guest access enabled. Again, it’s useful to baseline this configuration, especially permissions, so that in the event of a security breach you know which guests have access privileges. PowerShell, Graph, or a third-party reporting solution are all good methods to baseline your guest access configuration based on your needs.
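
As one way to build such a baseline, the sketch below groups guest accounts by team. It assumes membership has already been exported into dictionaries whose `userPrincipalName` and `userType` keys mirror the properties of a Microsoft Graph user object; how the export is produced, and the `guests_by_team` helper itself, are hypothetical.

```python
def guests_by_team(team_members):
    """Map each team name to the sorted guest accounts it contains.

    team_members: {team name: [dicts with 'userPrincipalName' and 'userType']}
    Teams without any guests are left out of the result.
    """
    baseline = {}
    for team, members in team_members.items():
        guests = sorted(
            m["userPrincipalName"] for m in members if m.get("userType") == "Guest"
        )
        if guests:
            baseline[team] = guests
    return baseline
```

Saving the result on a schedule gives you a history to compare against when you need to know who had access at a given point in time.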

Securing Teams with policies

Microsoft Teams has administrative policies which govern the capabilities of all major collaboration features. This includes policies that govern chat, meetings, live events, Teams apps, devices, calling, and the ability to create private channels.

Administrators can define global policies and additional specific policies that are applied to specific individuals or groups of users. The most granular policy takes effect, so a policy applied to a user object will override the settings defined at the global level.
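
The precedence rule can be sketched as a simple fallback chain. The function below is illustrative only; placing a group-assigned policy between the user-level and global policies is an assumption drawn from the "most granular wins" rule above.

```python
def effective_policy(global_policy, group_policy=None, user_policy=None):
    """Return the most granular policy that is actually assigned.

    The whole policy is selected; settings from different levels are not merged.
    """
    for candidate in (user_policy, group_policy):
        if candidate is not None:
            return candidate
    return global_policy


# Usage: a user with a directly assigned policy ignores the global one.
global_meeting = {"name": "Global", "allow_cloud_recording": True}
restricted = {"name": "Restricted", "allow_cloud_recording": False}
chosen = effective_policy(global_meeting, user_policy=restricted)
```

Because the selected policy replaces the global one as a whole, every setting in a custom policy needs review, not only the ones you meant to change.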

Each global policy should be reviewed and baselined.

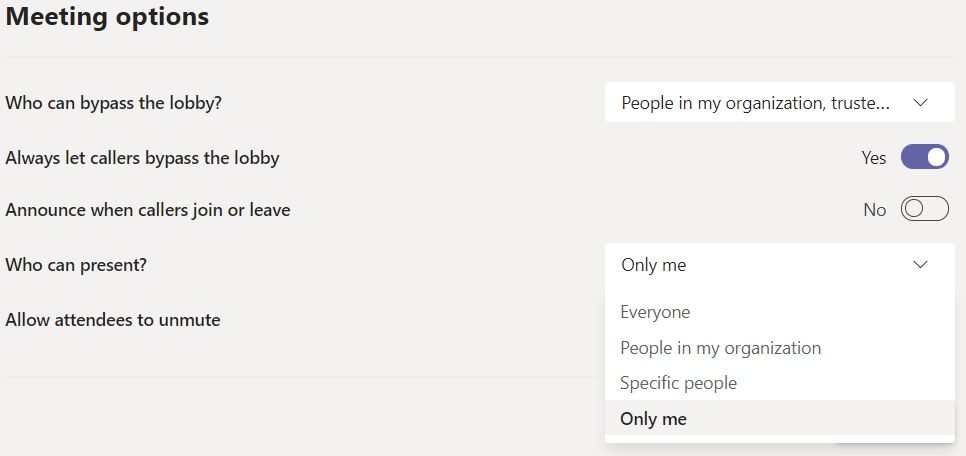

For example, the global meeting policy governs whether meetings can be recorded and stored in the cloud, and whether users outside of the organization can take control of the content sharing, start a meeting without the host, and have presenter rights in the meeting.

These settings might seem innocuous, but consider an internal meeting at which you’ll share, say, sales updates and product plans. You will want to carefully control whether anonymous users and guests outside the organization can join and see shared content and video. The administrative meeting policies applied to the host of the meeting govern the meeting options available when a user creates a Teams meeting.

Securing Microsoft Teams apps

Teams is a platform built to amplify collaboration by integrating applications and data. To do this, it allows users to install custom Teams apps which can then be integrated and used directly within Teams. The Microsoft Teams App Store offers Teams apps for each device platform that fall into three types:

- First-party applications provided by Microsoft — For example, the Communities app allows Yammer integration, and the Planner app featured in Teams lets users build and manage team tasks.

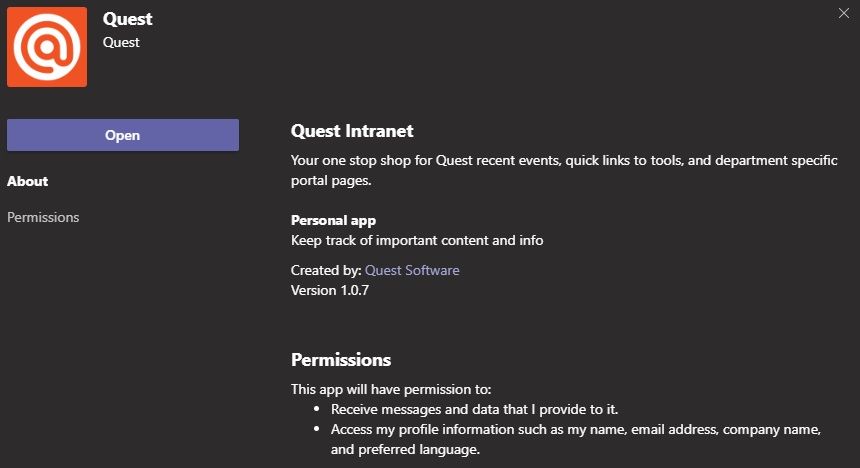

- Third-party applications — Independent software vendors write applications to integrate their functions with Teams. To date, certification is largely a self-certification process, meaning Microsoft does not independently research and “certify” the security of every app. While most Teams apps in the Teams store are transparent about what they do and what data they access, keep in mind that some applications access user and enterprise data such as mail and calendar info. Other apps are web snap-ins that look like a Teams-related third-party app but are in fact different applications hosted on different websites altogether. When a request to authorize a third-party Teams app is made, pay attention to the detailed description of what that app does and to the section called “Permissions”, which explicitly states what data permissions it will require.

- Line of Business (LOB) applications — Some companies develop custom add-ins and applications to perform specific business processes in Teams. For example, Quest has created its own app for integrating internal Quest information to Teams.

By default, all Microsoft-provided, third-party and custom apps are available in Teams. As with external access, you may be tempted to disable all apps for the sake of security and compliance.

Again, though, Microsoft lets you balance Teams security while your users enjoy the benefits of collaboration in one place. Admins can turn apps on or off through Teams app policies, which can be set globally or for individual users.

Of the three types, third-party apps generally merit the most scrutiny from admins. An acceptable first step is to block all third-party apps and allow only certain ones that are well known to the enterprise or have been vetted by a Security Officer. Then, when a team needs an app at the team level, they can request it subject to a validation process in IT.
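
The block-by-default approach for third-party apps can be expressed as a small allow-list check. This is a sketch under assumptions: the publisher type labels and app identifiers are invented for illustration and are not values used by Teams app policies.

```python
def is_app_allowed(app_id, publisher_type, approved_third_party=frozenset()):
    """Allow Microsoft and line-of-business apps; allow a third-party app
    only when it appears on the vetted allow list."""
    if publisher_type in ("microsoft", "lob"):
        return True
    if publisher_type == "third_party":
        return app_id in approved_third_party
    raise ValueError(f"unknown publisher type: {publisher_type!r}")
```

When IT validates a request, adding the app to the allow list is the only change needed; everything else stays blocked.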

Securing files in Microsoft Teams

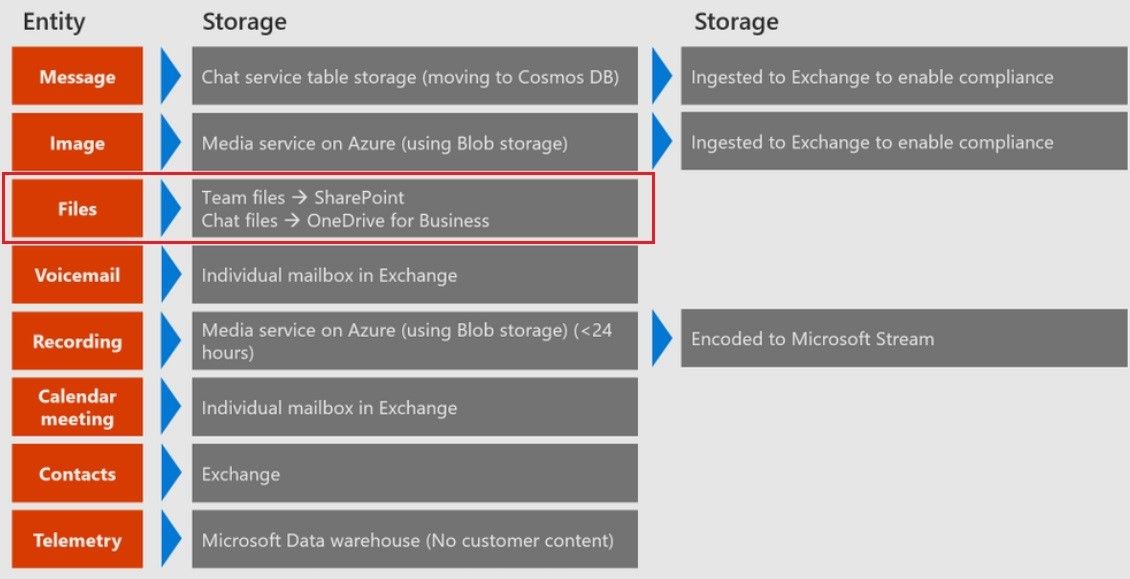

Security in Microsoft Teams extends to file storage. As shown in the diagram, each entity in Teams maps to a storage location in Microsoft’s cloud.

The third row indicates that files shared in Teams channels go to the associated SharePoint site collection, and files shared in individual chats go to the sender’s OneDrive for Business. A huge security benefit of Microsoft Teams is that it leverages all the built-in security of SharePoint and OneDrive for Business to secure this data. For example, external sharing of data in the channel of a team is governed by the external sharing controls on the associated SharePoint site collection.

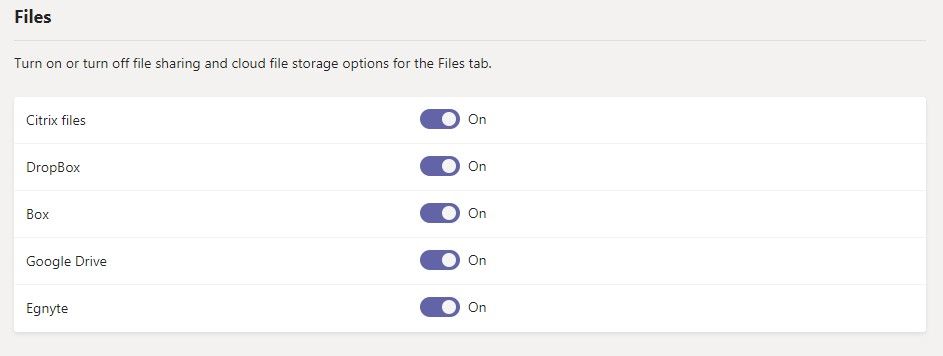

It is important to know that the Microsoft Teams service also allows users to store content from Teams channels with third-party storage providers like Google Drive, Box, and Dropbox. This capability is enabled by default. The settings that govern these capabilities are found in the Microsoft Teams Admin Center.

Enabling or disabling third-party storage should be part of an overall enterprise data security strategy, but if there is no compelling reason to store data outside of Microsoft 365, then SharePoint and OneDrive for Business are good choices from a security and governance perspective.

Managing roles and privileged admin access to Teams

Roles

Like other Microsoft 365 applications, Microsoft Teams administrative access is governed by Azure AD role-based access control (RBAC). Administrator user accounts have one or more of the following Teams-specific admin roles and associated access:

- Teams Service Administrator — Access to all management tools related to telephony, messaging, meetings and the teams themselves, and the ability to change settings, configuration and policies

- Teams Communications Administrator — Access to management tools for telephone number assignment, voice and meeting policies, and full access to the call analytics toolset

- Teams Communications Support Engineer — Access to the user call troubleshooting tools in the Microsoft Teams & Skype for Business admin center, with a view into call record information for all participants

- Teams Communications Support Specialist — Access to the user call troubleshooting tools in the Microsoft Teams & Skype for Business admin center, with a view into details for specific users only

It is very important to note that many of the privileged administrative roles at the Office 365 tenant level also have admin access to the Teams service. For example, if your organization has three Office 365 Global Administrators, all of them inherit carte-blanche access to your Teams admin settings as well. Managing and securing Microsoft Teams means you should guard and tightly control both the Global Administrator role and the Teams Service Administrator role in your organization. How do you do that? Once again, securing the underlying Azure AD identity is the most important step, because if a Teams Service Administrator account is compromised, the entire Teams service is compromised.
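
A quick way to see how far this access reaches is to list every account holding a role that grants full control of Teams. The sketch below assumes role assignments have been exported into a dictionary; the role name strings and the helper name are illustrative and should be matched to whatever your export actually contains.

```python
# Roles treated here as granting full control of the Teams service (illustrative labels).
TEAMS_FULL_ADMIN_ROLES = {"Global Administrator", "Teams Service Administrator"}


def accounts_with_full_teams_access(role_assignments):
    """Return the sorted accounts that hold at least one full-control role.

    role_assignments: {account name: [role names]}
    """
    return sorted(
        account
        for account, roles in role_assignments.items()
        if TEAMS_FULL_ADMIN_ROLES & set(roles)
    )
```

The resulting list is usually longer than the set of people who think of themselves as Teams admins, which is exactly why it is worth reviewing.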

Common questions about Microsoft Teams security

Is Teams secure from a network perspective?

From a network perspective, the Teams cloud service has built-in defenses against common security threats to the Teams service, including network denial-of-service attacks, eavesdropping, spoofing, and man-in-the-middle attacks.

Microsoft Teams security relies on Transport Layer Security (TLS) and mutual TLS (MTLS) protocols to ensure that all communications are encrypted. Teams data, including messages, files, meetings, and other content, is encrypted in transit and at rest in Microsoft datacenters.

MTLS encrypts server-to-server traffic. TLS is used for client-to-server (like instant messages) and signaling. Media flows like audio and video sharing are encrypted by Secure Real-Time Transport Protocol (SRTP)/TLS.

Where are the most overlooked Microsoft Teams vulnerabilities?

As we have seen with email, the end user is often the most vulnerable link in the chain of security. Email phishing attacks are increasing rapidly and becoming very sophisticated. While the number of phishing attacks in Microsoft Teams is far lower, there have been breaches involving malicious links posted in Teams messages (private chats and channel messages). Likewise, the potential exists for malicious files to be uploaded and make their way into your Teams deployment and then onto end-user devices.

Just like Teams leverages other Microsoft 365 workloads to deliver a fantastic collaboration experience, other management tools in the Microsoft 365 Security and Compliance ecosystem can be used to further secure the Teams application itself.

Office 365 Advanced Threat Protection (now called Microsoft Defender for Office 365) provides Safe Attachments and Safe Links capabilities to guard against malicious files and malware. These capabilities are available in the base service plan (Plan 1), which can be purchased as an add-on, and are also included in the full E5 license. There are also third-party management vendors that provide different layers of anti-phishing and anti-malware protection.

What is the importance of monitoring?

The value of monitoring is that it helps you understand what’s changing in your environment and can automatically alert you to anomalies.

Tools and technologies like Microsoft Teams are easy for you to deploy and for your co-workers to use, but that’s not where your job ends. When you create a baseline and monitor activity, you see the changes in who has access to what, who’s adding and removing data, and how that data is being used. In case of a security-related incident, monitoring with history makes it easier for you to trace backwards in time.

Does Microsoft Teams security extend to endpoint protection?

As we’ve described, Teams offers security as part of Microsoft’s greater Microsoft 365 and Azure suites. Microsoft also offers Enterprise Mobility + Security (EMS), a suite of security features that includes Azure AD, Intune, multi-factor authentication and Endpoint Configuration Manager.

With Intune, a part of EMS, you can ensure that users have access to your Office 365 resources only from devices that meet the compliance criteria you specify. For example, you can exclude devices that are jailbroken or that have no anti-virus protection.

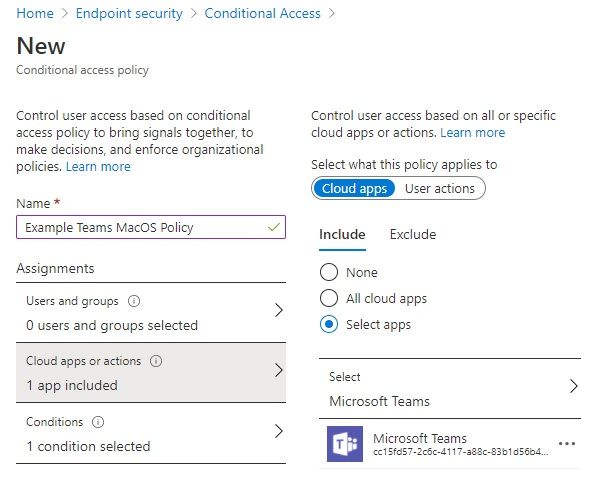

Teams also honors Azure Active Directory Conditional Access policies. For example, you can configure policies to restrict Teams app usage to devices that are Intune-compliant or joined to your domain.
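
The device rules described above can be sketched as two small checks. The `Device` record and both functions are hypothetical; they only model the examples given here (jailbreak status, anti-virus presence, and domain join) and are not how Intune or Conditional Access policies are actually defined.

```python
from dataclasses import dataclass


@dataclass
class Device:
    # Hypothetical device posture record; field names are illustrative.
    jailbroken: bool
    has_antivirus: bool
    domain_joined: bool = False


def is_compliant(device):
    """Compliance rule from the example: not jailbroken and has anti-virus."""
    return not device.jailbroken and device.has_antivirus


def may_use_teams(device):
    """Access rule from the example: compliant or joined to the domain."""
    return is_compliant(device) or device.domain_joined
```

Keeping the compliance rule separate from the access rule mirrors the split between defining device criteria and enforcing them per application.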

Microsoft Defender for Identity (formerly Azure Advanced Threat Protection), also a part of EMS, extends advanced identity protection and machine learning intelligence to Teams to protect against cyber-attacks.

Summary

As a growing share of the global workplace continues to shift to “work from anywhere” and Microsoft Teams is deployed and used by millions of users, it is important to understand all areas of Microsoft Teams security and configure it to meet the security and governance requirements for your organization.

This article is a primer that provides an overview of the major areas required to secure Microsoft Teams. Teams can be further secured by leveraging advanced Azure AD identity features and peripheral Microsoft (or third-party) solutions such as endpoint security. These areas require separate expertise to configure properly.

The good news is that the big wins from a Microsoft Teams security perspective lie in the basics outlined in this article. There are plenty of additional materials available from Microsoft, such as the top 12 tasks for security teams to support working from home, that you can use as checklists to cover the basics.

Microsoft Teams has become critical to business continuity. Do what you can by securing Teams and maximizing user collaboration to ensure that continuity is not compromised.